Original article by 扣钉日记 (WeChat official account ID: codelogs). Sharing is welcome; please keep the source attribution when reposting.

Introduction

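Recently our system showed a strange symptom: every few weeks it restarted in the middle of the night. The analysis took a long time and made a lasting impression, so I'm recording it here.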

First investigation

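A restart wipes out all of the process state, which made this problem very hard to investigate. The usual tools such as jstack, jmap and arthas were no use. All we had were the machine monitoring data and the logs.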

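The machine monitoring showed CPU and disk I/O rising around each restart. We soon confirmed that this told us nothing. JVM startup itself drives up CPU and disk I/O through class loading, JIT compilation and so on, so the spike was a consequence of the restart, not its cause.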

Next came the business logs and the kernel logs. After a while of analysis, the conclusions were:

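- At the restart times, system traffic had not risen noticeably.
- dmesg showed no trace of the oom-killer, even though it is often the reason a process restarts mysteriously.
- The deployment platform can also kill a process when it stops responding to health-check requests. We suspected it had killed ours by mistake, but the ops team was sure that could not happen.

The investigation stalled there. With no process state to look at, I wrote a script that monitored CPU and memory usage and automatically collected jstack and jmap output once a threshold was crossed. After deploying it, I put the investigation aside.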

Second investigation

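A few hours after the script went live, the process restarted again. This time it happened during the day, not in the middle of the night, and the investigation started over...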

During this round I suddenly realized I had never looked at the gc log. I opened it right away and found output like this:

    Heap after GC invocations=15036 (full 0):
     garbage-first heap   total 10485760K, used 1457203K [0x0000000540800000, 0x0000000540c05000, 0x00000007c0800000)
      region size 4096K, 9 young (36864K), 9 survivors (36864K)
     Metaspace       used 185408K, capacity 211096K, committed 214016K, reserved 1236992K
      class space    used 21493K, capacity 25808K, committed 26368K, reserved 1048576K
    }
     [Times: user=0.15 sys=0.04, real=0.06 secs]
    2021-03-06T09:49:25.564+0800: 914773.820: Total time for which application threads were stopped: 0.0585911 seconds, Stopping threads took: 0.0001795 seconds
    2021-03-06T09:49:25.564+0800: 914773.820: [GC concurrent-string-deduplication, 7304.0B->3080.0B(4224.0B), avg 52.0%, 0.0000975 secs]
    2021-03-06T09:50:31.544+0800: 914839.800: Total time for which application threads were stopped: 18.9777012 seconds, Stopping threads took: 18.9721235 seconds

What? Does this mean the JVM paused for 18 seconds? The GC just above took only 0.06 seconds!

I didn't know exactly what "application threads were stopped: 18.9777012 seconds" meant, so I searched online. Here is what I found:

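- This line really does mean the JVM paused for 18 seconds, the pause usually called STW (stop-the-world).
- The line is printed because of two JVM parameters, -XX:+PrintGCApplicationStoppedTime and -XX:+PrintGCDetails.
- These 18 seconds were not caused by GC. GC is not the only thing that puts the JVM into STW. The safepoint mechanism does too: operations such as jstack and jmap trigger a safepoint, and all threads wait at it until the operation completes.
- A GC-induced STW is generally preceded by a line like [Times: user=0.15 sys=0.04, real=0.06 secs]. So the GC-induced STW above actually lasted 0.0585911 seconds, which rounds to 0.06 seconds.

What is the safepoint mechanism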

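Some special JVM operations require every thread to be paused. The safepoint mechanism exists for this. When the JVM performs such an operation (Full GC, jstack, jmap, etc.), every thread blocks at a safepoint and resumes only after the operation completes. The JVM places safepoints at the following locations, which are the points where a thread gets a chance to stop: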

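- At method returns.
- In non-counted (unbounded) loops such as while(true): before each backward jump of the loop.
- In counted (bounded) loops: if the loop variable is a long, there is a safepoint. If it is an int, there is a safepoint only with -XX:+UseCountedLoopSafepoints.

After more investigation and some thought, I confirmed that this STW was caused by my own script! As the JVM runs, old-generation usage keeps climbing until a Full GC brings it back down. My script ran jmap as soon as old-gen usage passed 90%. That triggered a safepoint, every thread had to wait for jmap to finish, and the process stopped responding to requests. The deployment platform then killed it.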

The monitoring logic should have been: count it as a memory anomaly only if usage is still high after a Full GC.
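Below is a minimal bash sketch of that rule. It assumes old-gen usage and the Full GC count are sampled with jstat -gcutil, which reads the JVM's performance counters instead of walking the heap. The function names and the 90% default are hypothetical. The check reports an anomaly only when the FGC count has grown since the previous sample and the O (old-gen %) column is still above the threshold. It never runs jmap itself. It follows the text's rule literally, using Full GCs as the "after GC" signal. Under a collector such as G1, old-gen space is mostly reclaimed by mixed collections rather than Full GCs, so the signal may need rethinking there.

```shell
# Sketch (hypothetical helper names): decide whether old-gen usage is a real anomaly.

# Threshold in percent; overridable via the environment (hypothetical default).
OLD_GEN_THRESHOLD=${OLD_GEN_THRESHOLD:-90}

# Print "FGC O" (Full GC count, old-gen usage %) from one `jstat -gcutil` sample.
# Columns are looked up by header name, so extra columns in newer JDKs do not matter.
sample_gcutil() {
  local pid=$1
  jstat -gcutil "$pid" | awk '
    NR == 1 { for (i = 1; i <= NF; i++) col[$i] = i; next }
    NR == 2 { print $(col["FGC"]), $(col["O"]) }'
}

# Succeed (exit status 0) only if a Full GC happened since the previous sample
# AND old-gen usage is still above the threshold afterwards.
is_old_gen_anomaly() {
  local prev_fgc=$1 cur_fgc=$2 old_pct=$3
  # No Full GC yet: high usage may just be garbage that has not been collected.
  if (( cur_fgc <= prev_fgc )); then
    return 1
  fi
  # jstat prints percentages with decimals, so compare in awk rather than bash.
  awk -v o="$old_pct" -v t="$OLD_GEN_THRESHOLD" 'BEGIN { exit !(o > t) }'
}
```

A monitoring loop would call sample_gcutil periodically and keep the previous FGC value. It would collect heavier diagnostics only when is_old_gen_anomaly succeeds, keeping in mind that jmap itself stops the world.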

Once I had learned about safepoints, I read a lot of articles online. Most of them mentioned five groups of JVM parameters:

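    # Enable safepoint logging; the log goes to the JVM process's standard output
    -XX:+PrintSafepointStatistics -XX:PrintSafepointStatisticsCount=1
    # If a thread takes more than 2000 ms to reach a safepoint, treat it as a safepoint timeout and print the threads that have not reached it
    -XX:+SafepointTimeout -XX:SafepointTimeoutDelay=2000
    # Without this option the JIT compiler may optimize away the safepoint in for loops, so a thread cannot reach a safepoint until the loop ends;
    # with it, every loop iteration can reach a safepoint. Recommended.
    -XX:+UseCountedLoopSafepoints
    # In highly concurrent applications biased locking brings no performance gain and instead triggers many unnecessary safepoints. Recommended: disable it.
    -XX:-UseBiasedLocking
    # Stop the JVM from entering safepoints periodically; for example, "no vm operation" entries in the safepoint log are periodic safepoints triggered by the JVM
    -XX:+UnlockDiagnosticVMOptions -XX:GuaranteedSafepointInterval=0

Note: by default the JVM writes the safepoint log to standard output. We use the Resin server, whose watchdog mechanism captures that output, so the safepoint log ended up in the watchdog process's ${RESIN_HOME}/log/jvm-app-0.log.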
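The parameter list above is JDK 8 style, consistent with the PrintSafepointStatistics log format shown in this article. On JDK 9 and later, safepoint logging goes through unified logging instead, where -Xlog:safepoint is the usual equivalent. If a startup script must serve both, a small helper could pick the logging flags by major version. This is a hypothetical sketch. The function names are made up, it covers only the logging flags, and the version parsing assumes the usual java -version output.

```shell
# Sketch: choose safepoint-logging JVM flags by JDK major version (hypothetical helpers).

# Print the major version from `java -version`, e.g. "1.8.0_292" -> 8, "17.0.2" -> 17.
java_major_version() {
  java -version 2>&1 | awk -F'"' '/version/ {
    split($2, v, ".")
    print ((v[1] == "1") ? v[2] : v[1])
    exit
  }'
}

# Print the safepoint-logging flags for the given major version.
safepoint_log_flags() {
  local major=$1
  if (( major <= 8 )); then
    # JDK 8: the PrintSafepointStatistics family used in this article
    printf '%s\n' "-XX:+PrintSafepointStatistics -XX:PrintSafepointStatisticsCount=1"
  else
    # JDK 9+: unified logging
    printf '%s\n' "-Xlog:safepoint"
  fi
}
```

Usage would look like JAVA_OPTS="$JAVA_OPTS $(safepoint_log_flags "$(java_major_version)")".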

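I also found many reported cases online of long STW pauses involving -XX:+UseCountedLoopSafepoints and -XX:-UseBiasedLocking. At the time I was almost convinced that adding these two parameters would fix the problem.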

So instead of improving the monitoring script, I took it down and simply added these JVM parameters.

Safepoint log format

After adding the JVM parameters above, I checked the safepoint log right away. Its format looks like this:

    $ less jvm-app-0.log
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    24.692: ForceSafepoint                   [      77          0              1    ]      [     0     0     0     0     0    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    25.607: G1IncCollectionPause             [      77          0              0    ]      [     0     0     0     0   418    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    26.947: Deoptimize                       [      77          0              0    ]      [     0     0     0     0     1    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    27.136: ForceSafepoint                   [      77          0              1    ]      [     0     0     0     0     0    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    28.137: no vm operation                  [      77          0              1    ]      [     0     0     0     0     0    ]  0

Where:

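- Column 1 is the time the entry was printed, in seconds since the process started.
- Column 2 is the operation that triggered the safepoint. For example, G1IncCollectionPause is, as the name says, a pause caused by G1 GC.
- Column 3 describes the JVM threads:
  - total: the number of JVM threads when the STW happened.
  - initially_running: the number of threads still running when the STW happened. These threads account for the Spin phase time.
  - wait_to_block: the number of threads the STW had to block. These threads account for the Block phase time.
- Column 4 is the time spent in each safepoint phase:
  - Spin: when the JVM decides to enter a global safepoint, some threads are already at a safepoint and some are not. This phase is the time spent waiting for the others to reach one.
  - Block: even after reaching the safepoint, some threads that arrived late may still be running. This is the time spent waiting for them to block.
  - Sync: Spin + Block + time spent on other activities. The "Stopping threads took" value at the end of an STW line in the gc log equals Spin + Block.
  - Cleanup: internal cleanup work done by the JVM.
  - vmop: the time taken by the safepoint operation itself, such as G1IncCollectionPause (GC pause), Deoptimize (code deoptimization), RevokeBias (biased-lock revocation), PrintThreads (jstack), GC_HeapInspection (jmap -histo) and HeapDumper (jmap -dump).

Third investigation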

A few weeks later the problem came back. Next step: check the gc and safepoint logs. Sure enough, there was a long STW, and it was not caused by GC.

First, the gc log showed an STW of more than 16 seconds that was not caused by GC:

    # A 16 s STW shows up
    $ less gc-*.log
    Heap after GC invocations=1 (full 0):
     garbage-first heap   total 10485760K, used 21971K [0x0000000540800000, 0x0000000540c05000, 0x00000007c0800000)
      region size 4096K, 6 young (24576K), 6 survivors (24576K)
     Metaspace       used 25664K, capacity 26034K, committed 26496K, reserved 1073152K
      class space    used 3506K, capacity 3651K, committed 3712K, reserved 1048576K
    }
     [Times: user=0.13 sys=0.02, real=0.04 secs]
    2021-04-02T00:00:00.192+0800: 384896.192: Total time for which application threads were stopped: 0.0423070 seconds, Stopping threads took: 0.0000684 seconds
    2021-04-02T00:00:00.193+0800: 384896.193: Total time for which application threads were stopped: 0.0006532 seconds, Stopping threads took: 0.0000582 seconds
    2021-04-02T00:00:00.193+0800: 384896.193: Total time for which application threads were stopped: 0.0007572 seconds, Stopping threads took: 0.0000582 seconds
    2021-04-02T00:00:00.194+0800: 384896.194: Total time for which application threads were stopped: 0.0006226 seconds, Stopping threads took: 0.0000665 seconds
    2021-04-02T00:00:00.318+0800: 384896.318: Total time for which application threads were stopped: 0.1240032 seconds, Stopping threads took: 0.0000535 seconds
    2021-04-02T00:00:00.442+0800: 384896.442: Total time for which application threads were stopped: 0.1240013 seconds, Stopping threads took: 0.0007532 seconds
    2021-04-02T00:00:16.544+0800: 384912.544: Total time for which application threads were stopped: 16.1020012 seconds, Stopping threads took: 0.0000465 seconds
    2021-04-02T13:04:48.545+0800: 384912.545: Total time for which application threads were stopped: 0.0007232 seconds, Stopping threads took: 0.0000462 seconds

Then the safepoint log showed a 16-second safepoint operation, triggered by HeapWalkOperation:

    # The safepoint log also shows a 16 s HeapWalkOperation
    $ less jvm-app-0.log
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    384896.193: FindDeadlocks                [      96          0              0    ]      [     0     0     0     0     0    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    384896.193: ForceSafepoint               [      98          0              0    ]      [     0     0     0     0     0    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    384896.194: ThreadDump                   [      98          0              0    ]      [     0     0     0     0   124    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    384896.318: ThreadDump                   [      98          0              0    ]      [     0     0     0     0   124    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    384896.442: HeapWalkOperation            [      98          0              0    ]      [     0     0     0     0 16102    ]  0
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    384912.545: no vm operation              [      98          0              0    ]      [     0     0     0     0     0    ]  0

Comparing the timestamps of the two logs shows that they line up:

    # Check when the processes started
    $ ps h -o lstart -C java|xargs -i date -d '{}' +'%F %T'
    2021-03-28 13:05:03    # watchdog process
    2021-03-28 13:05:04    # this one is the actual service process
    # The safepoint log records seconds since process start; HeapWalkOperation is at 384896.442
    # Use date to add seconds to a time; to subtract, use "xx seconds ago"
    $ date -d '2021-03-28 13:05:04 384896 seconds' +'%F %T'
    2021-04-02 00:00:00

The STW in the gc log is stamped 2021-04-02T00:00:16, while the safepoint log puts it at 2021-04-02 00:00:00. The difference of 16 seconds is exactly the STW duration. The gc log records the time after the STW ends, and the safepoint log records the time before it begins, so the two logs agree: the 16-second STW was caused by HeapWalkOperation.
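The manual steps above can be folded into a small script: look up the process start time, add the relative seconds with date, then look for long vmop times. Below is a minimal sketch. It assumes GNU date and the JDK 8 PrintSafepointStatistics layout, with each entry on its own line as in the real log file. Both function names are hypothetical.

```shell
# Sketch (hypothetical helpers): map safepoint-log entries to wall-clock time.
# Requires GNU date; assumes the JDK 8 PrintSafepointStatistics layout.

# Convert "seconds since JVM start" into wall-clock time, given the process
# start time (e.g. from `ps -o lstart`), printed as YYYY-MM-DD HH:MM:SS.
uptime_to_wallclock() {
  local start=$1 secs=$2
  # Relative items in GNU date take whole seconds, so drop the fraction.
  date -d "$start ${secs%.*} seconds" +'%F %T'
}

# Print "wall-clock<TAB>operation<TAB>vmop ms" for every safepoint whose
# vmop time exceeds threshold_ms.
# Usage: long_safepoints <safepoint-log> '<process start>' [threshold_ms]
long_safepoints() {
  local log=$1 start=$2 threshold_ms=${3:-1000}
  awk -v t="$threshold_ms" '
    /^[0-9]+\.[0-9]+: / {                 # data lines start with the uptime
      n = split($0, parts, /\[/)
      split(parts[n], times, " ")         # spin block sync cleanup vmop ] page_trap_count
      if (times[5] + 0 > t) {
        ts = $1; sub(/:$/, "", ts)
        op = $0; sub(/^[^:]+: */, "", op); sub(/ *\[.*/, "", op)
        print ts "\t" op "\t" times[5]
      }
    }' "$log" |
  while IFS=$'\t' read -r ts op ms; do
    printf '%s\t%s\t%s ms\n' "$(uptime_to_wallclock "$start" "$ts")" "$op" "$ms"
  done
}
```

Here is an example run, using the service process start time from ps: long_safepoints jvm-app-0.log '2021-03-28 13:05:04' 1000 on the log above should list only the HeapWalkOperation, starting at 2021-04-02 00:00:00 local time with a vmop of 16102 ms. The 124 ms ThreadDump entries fall below the threshold.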

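The name suggests a heap-walking operation, similar to jmap. But my script had already been taken down, so nothing should have been running jmap. Apart from my Resin server process, there were no other processes on the machine!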

At this point I had found part of the cause, but not how it came about. The hunt for the root cause went on...

The Nth investigation

I no longer remember which round this was. The problem came back several more times, but this time I found the root cause. Here's how:

As before, I checked the gc log and the safepoint log:

    # A 14 s STW in the gc log
    $ less gc-*.log
    2021-05-16T00:00:14.634+0800: 324412.634: Total time for which application threads were stopped: 14.1570012 seconds, Stopping threads took: 0.0000672 seconds
    # The safepoint log has a matching 14 s HeapWalkOperation
    $ less jvm-app-0.log
             vmop                    [threads: total initially_running wait_to_block]    [time: spin block sync cleanup vmop] page_trap_count
    324398.477: HeapWalkOperation            [      98          0              0    ]      [     0     0     0     0 14157    ]  0

Exactly the same symptoms as before. The key question was still what triggered the HeapWalkOperation.
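Every round began with the same check: find long "Total time for which application threads were stopped" entries in the gc log. Below is a minimal awk sketch of that check. The function name is hypothetical, and it assumes JDK 8 -XX:+PrintGCApplicationStoppedTime lines like the ones above. It also splits each pause into the time spent reaching the safepoint ("Stopping threads took", i.e. Spin + Block) and the remainder, which is mostly cleanup plus the operation itself.

```shell
# Sketch: list STW pauses longer than a threshold (seconds) from a JDK 8 gc log.
# Usage: long_stops <gc-log> [threshold_seconds]
long_stops() {
  local log=$1 threshold=${2:-1}
  awk -v t="$threshold" '
    /Total time for which application threads were stopped:/ {
      ts = $1; sub(/:$/, "", ts)          # first timestamp on the line
      stopped = $0
      sub(/.*threads were stopped: /, "", stopped); sub(/ seconds.*/, "", stopped)
      reach = $0
      sub(/.*Stopping threads took: /, "", reach); sub(/ seconds.*/, "", reach)
      if (stopped + 0 > t)
        printf "%s stopped=%ss to_safepoint=%ss operation=%.4fs\n", ts, stopped, reach, stopped - reach
    }' "$log"
}
```

The split distinguishes two kinds of pause. Run on the second round's gc log, the 18.98-second pause would show to_safepoint=18.9721235s and operation=0.0056s, so nearly all of it went into bringing threads to the safepoint. The 16.10-second pause from the third round is the opposite case, with almost all of the time in the operation.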

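I had been studying Linux commands lately and was writing up how to use grep. On a whim, I ran a recursive grep for the word "heap" in the Resin directory:

    # -i  case-insensitive search
    # -r  recursive: search for heap in every file under the current directory, its subdirectories, and so on
    # -n  print the line number of each match
    $ grep -irn heap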

To my surprise, Resin turned out to have HeapDump-related configuration, apparently part of some health-check mechanism.
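A narrower version of that search is possible, limited to the Resin health actions that take heap or thread dumps. It could help find them and, after editing the configuration, confirm that none is still active. Below is a minimal sketch. The function name is hypothetical, the element names come from the health.xml excerpt later in this article, and it only skips elements commented out on the same line, not multi-line comments.

```shell
# Sketch: list Resin health actions that dump the heap or threads (hypothetical helper).
# Usage: find_dump_actions [resin_conf_dir]
find_dump_actions() {
  local dir=${1:-.}
  # First grep: -r recursive, -n line numbers, -E extended regex, XML files only.
  # Second grep drops elements commented out on the same line, e.g. <!-- <health:DumpHeap/> -->
  grep -rnE --include='*.xml' '<health:(DumpHeap|DumpThreads|PdfReport|Snapshot)[ />]' "$dir" |
    grep -v '<!--[[:space:]]*<health:'
}
```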

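After working through the Resin documentation, I confirmed that Resin has a variety of health-check mechanisms. For example, once a week at 00:00 it generates a PDF report. The report's data comes from operations similar to jstack and jmap, except that Resin performs them by calling certain JDK methods. An introduction to Resin's health checks: http://www.caucho.com/resin-4.0/admin/health-checking.xtp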

    <health:PdfReport>
      <path>${resin.root}/doc/admin/pdf-gen.php</path>
      <report>Summary</report>
      <period>7D</period>
      <snapshot/>
      <mail-to>${email}</mail-to>
      <mail-from>${email_from}</mail-from>
      <!-- <profile-time>60s</profile-time> -->
      <health:IfCron value="0 0 * * 0"/>
    </health:PdfReport>

This mechanism generates PDF reports in the ${RESIN_HOME}/log directory:

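    $ ls -l
    -rw-r--r-- 1 work work 2539860 2021-05-02 00:00:26 app-0-Summary-20210502T0000.pdf
    -rw-rw-r-- 1 work work 3383712 2021-05-09 00:00:11 app-0-Summary-20210509T0000.pdf
    -rw-rw-r-- 1 work work 1814296 2021-05-16 00:00:16 app-0-Summary-20210516T0000.pdf

How long a heap walk takes depends entirely on the JVM's memory usage at that moment. High usage means a long walk, low usage a short one. So sometimes the pause crossed the deployment platform's kill threshold and sometimes it didn't. That is why the restarts did not happen at midnight every week, and why we never noticed the midnight pattern.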

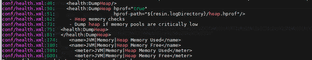

So I went straight to Resin's health.xml and turned off all the related health-check mechanisms:

    <health:ActionSequence>
      <health:IfHealthCritical time="2m"/>
      <health:FailSafeRestart timeout="10m"/>
      <health:DumpJmx/>
      <!-- <health:DumpThreads/> -->  <!-- comment this out -->
      <health:ScoreboardReport/>
      <!-- <health:DumpHeap/> -->  <!-- comment this out -->
      <!-- <health:DumpHeap hprof="true" hprof-path="${resin.logDirectory}/heap.hprof"/> -->  <!-- comment this out -->
      <health:StartProfiler active-time="2m" wait="true"/>
      <health:Restart/>
    </health:ActionSequence>

    <health:MemoryTenuredHealthCheck>
      <enabled>false</enabled>  <!-- add this -->
      <memory-free-min>1m</memory-free-min>
    </health:MemoryTenuredHealthCheck>

    <health:MemoryPermGenHealthCheck>
      <enabled>false</enabled>  <!-- add this -->
      <memory-free-min>1m</memory-free-min>
    </health:MemoryPermGenHealthCheck>

    <health:CpuHealthCheck>
      <enabled>false</enabled>  <!-- add this -->
      <warning-threshold>95</warning-threshold>
      <!-- <critical-threshold>99</critical-threshold> -->
    </health:CpuHealthCheck>

    <health:DumpThreads>
      <health:Or>
        <health:IfHealthWarning healthCheck="${cpuHealthCheck}" time="2m"/>
        <!-- <health:IfHealthEvent regexp="caucho.thread"/> -->  <!-- comment this out; this event is defined by <health:AnomalyAnalyzer> -->
      </health:Or>
      <health:IfNotRecent time="15m"/>
    </health:DumpThreads>

    <health:JvmDeadlockHealthCheck>
      <enabled>false</enabled>  <!-- add this -->
    </health:JvmDeadlockHealthCheck>

    <health:DumpJmx async="true">  <!-- delete nodes like this -->
      <health:OnStart/>
    </health:DumpJmx>

    <health:Snapshot>  <!-- delete nodes like this -->
      <health:OnAbnormalStop/>
    </health:Snapshot>

    <health:AnomalyAnalyzer>  <!-- delete nodes like this -->
    <health:PdfReport>  <!-- delete nodes like this -->

After this change, two months went by without a single restart, which confirmed that the problem was solved.

Summary

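This investigation followed a reasonable line of reasoning, but the breakthrough that finally exposed the root cause was a stroke of luck. A random grep turned up the clue, and there's no telling whether I'll be that lucky next time.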

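Thinking it over afterwards, the conventional approach to this kind of problem is still to deploy a script that runs a tracing tool such as perf or bpftrace. The tool would place a probe on the entry function of the JVM's safepoint operations and, whenever a safepoint operation runs too long, print the relevant native JVM call stack and Java call stack. I've written about tracing tools before, if you're interested: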

Linux命令拾遗-动态追踪工具 (Linux Command Gleanings: Dynamic Tracing Tools)

Linux命令拾遗-剖析工具 (Linux Command Gleanings: Profiling Tools)